Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

I figured you'd click on the blue up-arrow next to the quote which will take you to the relevant post (so i don't spam the thread again).

Duh, got it!! Will check it out.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

But every time you write down a number - whether to paper; or to memory - you are handling a representation.

Of course. But the representation is not the number.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

You are mentioning the number. In the language of C - you have a pointer.

Yes exactly. A representation is a pointer to the abstract concept it points to. 2 + 2 and 4 and 3.999... are distinct representations that point to the abstract number we call 4. Just as "justice" is a word that points to the abstract idea of justice, a thing we care about and that is highly imperfectly implemented in the real world.
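To make the "distinct representations, one number" point concrete, here is a minimal Python sketch using exact rational arithmetic. The helper name `truncated_3_999` is made up for illustration. It shows that 2 + 2 and 4 compare equal, and that the gap between 4 and the n-digit truncation 3.99...9 is exactly 10**-n, which is why the infinite decimal names 4 itself.

```python
from fractions import Fraction

# Two different spellings of the same number.
two_plus_two = Fraction(2) + Fraction(2)
four = Fraction(4)

def truncated_3_999(n):
    """Hypothetical helper: 3.99...9 with n nines, as an exact rational."""
    return 3 + sum(Fraction(9, 10**k) for k in range(1, n + 1))

# The gap to 4 after n nines is exactly 10**-n, so it drops below
# any positive bound; the unending decimal 3.999... denotes 4.
gaps = [four - truncated_3_999(n) for n in (1, 5, 20)]

print(two_plus_two == four)  # True
print(gaps[1])               # 1/100000
```

The expressions differ as strings of symbols; the equality test only cares about what they point to.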

It's not only in math that we use abstractions as perfected examples of the imperfect things of our world, right? To be human is to abstract.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

So you could (metaphorically) think of a number as "an address on the ticker tape of a Turing machine", but it gets stupidly recursive - how do you represent the address?

Of course TMs can represent numbers. I don't see what your problem is. In computer memory if I have an address that points to a bit pattern that represents a number, so what? I hope this isn't confusing you in any way. What is your concern with a chain of a dozen pointers that end up pointing to a memory location that holds a bit pattern that represents a number? So what?
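A toy sketch of that chain of a dozen pointers, in Python. The memory layout, the `("ptr", ...)` / `("int", ...)` cell tags and the name `dereference` are all assumptions made up for this illustration, not a model of any real machine.

```python
def dereference(memory, address):
    """Follow ('ptr', addr) cells until an ('int', bits) cell is reached.

    Returns the number the final bit pattern represents and the hop count.
    """
    hops = 0
    kind, payload = memory[address]
    while kind == "ptr":
        kind, payload = memory[payload]
        hops += 1
    return int(payload, 2), hops

# Hypothetical toy memory: twelve pointers chained in front of one bit pattern.
memory = {addr: ("ptr", addr + 1) for addr in range(12)}
memory[12] = ("int", "100")  # the bit pattern 100 represents four

value, hops = dereference(memory, 0)
print(value, hops)  # 4 12
```

The indirection bottoms out after finitely many hops, so there is no regress: the addresses are themselves just more bit patterns.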

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

And yes - I am blurring lines between countable/uncountable infinities so my metaphor works.

This remark doesn't make any sense at all. What do countable and uncountable infinities have to do with anything? Except to note that TMs can only represent at most countably many numbers. Most real numbers are not computable.
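The countability claim can be sketched in a few lines of Python. Every TM description can be written as a finite string over a finite alphabet, and the finite strings can be listed one after another, so there are at most countably many machines and therefore at most countably many computable numbers. The generator name `all_programs` and the binary alphabet are illustrative assumptions.

```python
from itertools import count, islice, product

def all_programs(alphabet="01"):
    """Yield every finite string over `alphabet`, shortest first."""
    for length in count(0):
        for symbols in product(alphabet, repeat=length):
            yield "".join(symbols)

# Any particular finite string shows up at some finite position in this list.
first = list(islice(all_programs(), 7))
print(first)  # ['', '0', '1', '00', '01', '10', '11']
```

Cantor's diagonal argument shows the reals cannot be listed this way, which is why most reals are not computable.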

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

It doesn't matter! It's just a label. Definitions are irrelevant. Empirical measurements matter.

See I don't know what you mean here. Of course measurement matters. When an engineer is building a bridge I don't care if she knows abstract set theory, only that she knows how to build bridges. But that doesn't invalidate set theory. It's two different things. Engineering is about the physical world and abstract math is not. Surely you are not confused on this point either. So what is your point? That's what I don't understand. So what if bridge building isn't set theory? SO WHAT?? Why do you go on about it?

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

In 2020 we have arbitrary-precision software libraries.

But of course they are actually no such thing, even if they're called that. All physical machines are limited by time, space, and energy. So we can never arbitrarily represent all the real numbers, or even, in the age of the universe, all the positive integers. Computers are finite.

All arbitrary precision means is that you can have as many decimal places of accuracy as you like, subject to the physical limitations of the computer. You can NOT approximate every real number for the simple reason that your computer is finite. Again, surely you know that.
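For what "as many decimal places as you like, subject to the machine" looks like in practice, here is a small sketch with Python's standard `decimal` module. The function name `sqrt2` is made up for the example. The precision is a parameter you pick, but each extra digit costs time and memory, so on any physical machine there is a largest value you can actually use.

```python
from decimal import Decimal, localcontext

def sqrt2(digits):
    """Square root of 2 rounded to `digits` significant digits."""
    with localcontext() as ctx:
        ctx.prec = digits          # precision is chosen by the caller...
        return Decimal(2).sqrt()   # ...but every digit has to be stored somewhere

print(sqrt2(10))   # 1.414213562
print(len(sqrt2(1000).as_tuple().digits))  # 1000
```

However large `digits` is, the result is still a finite decimal, that is, a rational approximation to the square root of 2 and not the number itself.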

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

Having an algorithm that produces the N-th digit of an irrational number is still an issue of tractability if the value of N pushes you beyond the limits of physics.

Of course. Is this not obvious to you? Is it not obvious to you that it's obvious to me?

Turing made the definition he did because he understood all this. He wrote down an idealized, abstract representation of a machine that computes but is not bound by physical limitations.
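A minimal sketch of that idealization in Python, not Turing's own notation: a transition table, a head, and a tape that grows in either direction whenever the head walks off the end. The names `run_tm` and `INCREMENT`, the state names and the `_` blank symbol are assumptions chosen for the example, which adds one to a binary number.

```python
from collections import defaultdict

def run_tm(transitions, tape_input, start="scan", halt="halt", blank="_"):
    """Run a one-tape machine; the tape extends on demand in both directions."""
    tape = defaultdict(lambda: blank, enumerate(tape_input))
    state, head = start, 0
    while state != halt:
        write, move, state = transitions[(state, tape[head])]
        tape[head] = write
        head += 1 if move == "R" else -1
    cells = range(min(tape), max(tape) + 1)
    return "".join(tape[i] for i in cells).strip(blank)

# Hypothetical machine: walk to the right end, then add one with carry.
INCREMENT = {
    ("scan", "0"): ("0", "R", "scan"),
    ("scan", "1"): ("1", "R", "scan"),
    ("scan", "_"): ("_", "L", "carry"),
    ("carry", "1"): ("0", "L", "carry"),
    ("carry", "0"): ("1", "L", "halt"),
    ("carry", "_"): ("1", "L", "halt"),
}

print(run_tm(INCREMENT, "1011"))  # 1100
print(run_tm(INCREMENT, "111"))   # 1000
```

Nothing in the definition says how long the tape may get or how many steps are allowed. On a real computer the dictionary eventually runs out of memory, and that gap is exactly the difference between the abstract machine and a physical one.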

Is this not perfectly obvious? Why do you keep belaboring the point? That's what I don't get.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

It took Google 12 days to calculate 31 trillion digits. How many do you want/need?

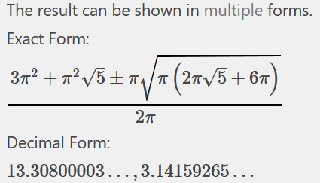

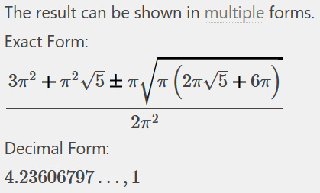

To compute the circumference of the universe? About 40 decimal digits, if memory serves. I forget the exact number but it's relatively small.
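A back-of-the-envelope sketch of that digit count, in Python. The two constants are rough assumptions, not measurements taken from this thread: a diameter of about 8.8e26 metres for the observable universe and about 1e-10 metres for a hydrogen atom. The function name `decimals_needed` is made up for the example.

```python
from fractions import Fraction

DIAMETER_M = Fraction(88, 10) * 10**26  # assumed: observable universe, about 8.8e26 m
ATOM_M = Fraction(1, 10**10)            # assumed: hydrogen atom, about 1e-10 m

def decimals_needed(diameter, tolerance):
    """Smallest n with diameter * 10**-n <= tolerance.

    Truncating pi after n decimal places changes it by less than 10**-n,
    so the circumference pi * diameter is off by less than diameter * 10**-n.
    """
    n = 0
    while diameter * Fraction(1, 10**n) > tolerance:
        n += 1
    return n

print(decimals_needed(DIAMETER_M, ATOM_M))  # 37
```

Under those assumptions a few dozen decimal places already pin the circumference down to within an atom's width.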

To express the entire decimal representation of pi? You need all of them.

But pi is not a good example, because there are many finite-symbol closed-form expressions for pi.

http://mathworld.wolfram.com/PiFormulas.html

But why are you going on about this? It's perfectly clear that we can crank out as many digits of pi as we want, ignoring resource constraints. That's Turing's definition of computability.
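As an illustration of cranking out digits without any bound built into the procedure, here is a Python sketch of the streaming ("spigot") algorithm usually attributed to Jeremy Gibbons. The generator name `pi_digits` is an assumption. It relies on Python integers growing as needed, so the only thing that ever stops it is the machine's memory or your patience.

```python
from itertools import islice

def pi_digits():
    """Yield the decimal digits of pi one at a time, without end."""
    q, r, t, k, n, l = 1, 0, 1, 1, 3, 3
    while True:
        if 4 * q + r - t < n * t:
            # The next digit is settled: emit it and rescale the state.
            yield n
            q, r, n = 10 * q, 10 * (r - n * t), (10 * (3 * q + r)) // t - 10 * n
        else:
            # Not enough information yet: absorb one more term of the series.
            q, r, t, k, n, l = (
                q * k,
                (2 * q + r) * l,
                t * l,
                k + 1,
                (q * (7 * k + 2) + r * l) // (t * l),
                l + 2,
            )

print(list(islice(pi_digits(), 10)))  # [3, 1, 4, 1, 5, 9, 2, 6, 5, 3]
```

The program text is finite and fixed. Asking for more digits only means letting it run longer, which is the abstract sense of computable, as opposed to what fits in the age of the universe.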

And since the universe is finite and computations take time, space, and energy, we can only crank out finitely many digits of pi in the age of the universe.

WHO THE HECK THINKS ANYONE IS DISPUTING THIS, or not understanding it?

It's two different things. What we can do in the physical world, and what we can do with abstract computations having unbounded resources. Do you not understand this? Do you have to explain it to me, to yourself? I'm constantly confused by where you're coming from. You are stating the most obvious, commonplace, well-known simplicities, as if they were ... what? Revelations? Talking points? What? Tell me.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

If you need more than the computational resources of the universe - then it's not computable.

If you need less than the computational resources of the universe - then it's computable.

No. Turing-computable is Turing-computable, and Skepdick-computable is Skepdick-computable. You do yourself no favors by insisting that everyone else in the entire world is using the wrong definition. You can call coffee tea, but if you ask for tea at the diner they're going to give you coffee. You can't start arguing with them that YOU use the word tea to mean coffee.

In computer science, the word computable means exactly what Turing said it meant in 1936. Nobody's changed it since then and nobody's found a better idea; that is, a definition that encompasses computations that Turing would not recognize as such.

Even quantum computing has the SAME power as classical computation. It runs more efficiently on some problems (as far as we know, but not proven); but in terms of computability, it's the same.

Why are you calling coffee, tea? Computable has a standard meaning and by insisting that YOUR definition is right and the standard one is wrong, you embarrass yourself. A definition can't be wrong. If you want to talk about the limits of practical computation, call it "practically computable" or Skepdick-computable and we can have a conversation. Otherwise you're tedious.

Namedropping some philosophical shit while acting like a crank is a waste of time.

As long as you insist on changing a common definition and then arguing with people about it, you're a crank. Your basic ideas are fine but the way you come across is very cranky.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

Abstract models are useful lenses for understanding patterns in the real world. How else do we "understand?" if we don't have a model for understanding.

Wow deep man.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

Yes! Representation! Floating points are approximations of reals. They are not reals.

Floats represent reals in the sense that all floats are rational numbers. That's as far as it goes. And you can't represent ALL rationals, because your computing equipment has bounded resources. There are real numbers you can NOT approximate with floating point arithmetic running on physical hardware. It would be helpful for you to understand this point.
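A short Python sketch of both halves of that point, assuming the usual 64-bit IEEE 754 doubles that CPython uses on common platforms. The helper name `exact_value` is made up. Every finite float is exactly some rational with a power-of-two denominator, and numbers outside the format's range cannot be approximated at all.

```python
import math
from fractions import Fraction

def exact_value(x):
    """The exact rational number that a finite float stands for."""
    return Fraction(x)

tenth = exact_value(0.1)
is_power_of_two = (tenth.denominator & (tenth.denominator - 1)) == 0

print(tenth == Fraction(1, 10))  # False: 0.1 is stored as a nearby binary fraction
print(is_power_of_two)           # True
print(float("1e400"))            # inf: too large for a 64-bit float
print(float("1e-400"))           # 0.0: too small for a 64-bit float
```

So even a rational as ordinary as 1/10 is only approximated, and a number like 10**400 has no useful float neighbour at all.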

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

But numbers don't exist. So who cares?

As long as you don't want to do physics or biology, I suppose we don't care. How much civilization are you willing to abandon to stick to your position, whatever it is? Abstractions DO exist in the world. Justice, law, traffic lights, property. We could not run our lives without abstractions. Math consists of abstractions that often do have impact in our lives.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

wtf wrote: ↑Tue Feb 11, 2020 12:48 am

Isn't computer programming pretty much the canonical example of symbolic computation?

Not according to Programming Language Theory. Symbolic is just one of the many paradigms for expressing computations.

Very odd response. Computer programming is not an example of symbolic computation? You would deny this? Either by deliberately mis-parsing it, or by stating an obvious falsehood? You say programming ISN'T symbolic computation? Wow. News to me.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

That's why I keep telling you that Mathematics is just a language. Like Python, or Haskell, or C - it's notation.

You "keep telling me" things that are perfectly obvious. Why do you do that?

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

I come from a place where computation is broader than that.

Than what? You lost me on that.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

It's empirical/practical. Space and Time complexity are practical concerns.

Of course. All you're doing in all of this is talking about the difference between computer science and software engineering. Why do you think this is news to anyone?

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

Yeah. It is. When Mathematics eventually catches on to proving theorems on computers, you'll figure out it's all just knowledge representation and organization.

Then we'll all be as smart as you? You're being a crank again. You're right and everyone else is wrong.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

Only thing different is your problem-space concerns itself with abstract problems.

Ok. What of it?

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

Imperative programming: telling the “machine” how to do something, and as a result what you want to happen will happen.

Declarative programming: telling the “machine” what you would like to happen, and let the computer figure out how to do it.

Haskell is declarative.

Python is imperative.

Mathematics is declarative. You are happy to write f(x,y) = z and skip the 10 pages in between - the procedure/algorithm to obtain the result.

Oh I see. So what?
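For reference, the imperative/declarative distinction in the quote can be shown inside a single language. This is a minimal Python sketch with made-up variable names. It also suggests the labels are a matter of style more than of language, since Python happily does both.

```python
numbers = [1, 2, 3, 4, 5, 6]

# Imperative style: spell out each step and mutate an accumulator.
total_imperative = 0
for n in numbers:
    if n % 2 == 0:
        total_imperative += n * n

# Declarative style: describe the result and leave the iteration to the language.
total_declarative = sum(n * n for n in numbers if n % 2 == 0)

print(total_imperative, total_declarative)  # 56 56
```

Both spellings compute the same value; they differ only in how much of the "how" is written down.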

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

My thing is straddling the invented/discovered fence. How is it, that different parts of society go to their own corners, develop their own formal theories about their own specialised interests and all of them come out with the same results (alas - different notations)?

Ok cool. When you're explaining this stuff to me, could you do me a favor and simply use standard terminology for things that have standard definitions? Like computable, which has a particular universally-understood meaning in computer science and math. If you want to define Skepdick-computability that would be much more clear in terms of communication. You can see that, right?

Besides, Skepdick-computability keeps changing. It's a function of how fast our hardware is and how clever our algorithms are. Whereas Turing-computability is eternal. It doesn't change over time.
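A sketch of why the practical notion moves while the abstract one doesn't. The same algorithm is run under different step budgets. The function name `nth_prime` and the choice of counting trial divisions as "steps" are assumptions made for the illustration.

```python
import math

def nth_prime(n, budget=None):
    """Return the n-th prime (1-indexed) by trial division.

    If `budget` is given and more than `budget` trial divisions are
    needed, give up and return None.
    """
    used = 0
    found = 0
    candidate = 1
    while found < n:
        candidate += 1
        is_prime = True
        for d in range(2, math.isqrt(candidate) + 1):
            used += 1
            if budget is not None and used > budget:
                return None  # out of resources, not out of algorithm
            if candidate % d == 0:
                is_prime = False
                break
        if is_prime:
            found += 1
    return candidate

print(nth_prime(10))             # 29
print(nth_prime(10, budget=5))   # None
print(nth_prime(10, budget=10**6))  # 29
```

Whether the call returns a number or `None` depends on the budget, which is a fact about the hardware and the year. That the function is computable in Turing's sense is a fact about the algorithm and doesn't depend on either.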

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

What is "IT" that we all keep discovering/observing?

What is reality, man? I hear ya.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

I have no use for the language of "believing in". I don't care for ice hockey, but I believe in it.

You don't care for computer science, but you also have some kind of psychological block on believing in it or using its standard terminology.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

I am saying that if the abstract world is some place "elsewhere" yet we keep finding the same patterns, that sure meets the empirical bar for "reproducibility".

Meaning what? I can't connect your personal philosophy with your technical points.

Skepdick wrote: ↑Tue Feb 11, 2020 10:52 pm

The question that nags me is: what is "IT" that we are observing/describing with formal languages?

Abstract mathematical objects. Just as "larceny" refers to an abstract class of behaviors deemed against the law by the legal profession. Just as genes were an abstraction developed to explain the inheritability of various biological traits. Just as quarks are an abstraction in physics.

Have you noticed that humans have the power of abstraction? And that our entire civilization depends on it? Math and computer science are highly formalized examples. But when you stop at a red light and go at the green, you are reifying abstractions. Assigning meaning to colors that inherently have no meaning. Yet your life depends on doing it the same way everyone else does. So it's real. Abstractions are real things. What sort of things? Abstract things.

https://plato.stanford.edu/entries/abstract-objects/